Introduction

In a previous post (Creating a corpus for Named Entity Recognition), we learnt how to build a custom corpus of tweets. We queried the TMDB API for popular films and TV shows and stored their titles. Then, we searched for tweets containing those titles and saved the results as a JSONL corpus. In this post, we will learn how to tag the titles in that corpus to produce an annotated corpus, which we will later use to train a Named Entity Recognition (NER) model.

For this purpose, we will use Doccano, an open-source, browser-based annotation tool. It can be run either locally or online; this time we will run it on a free Heroku web server.

Prerequisites

- Basic knowledge of Python

- Having read the first post in this series (Creating a corpus for Named Entity Recognition)

1 Heroku

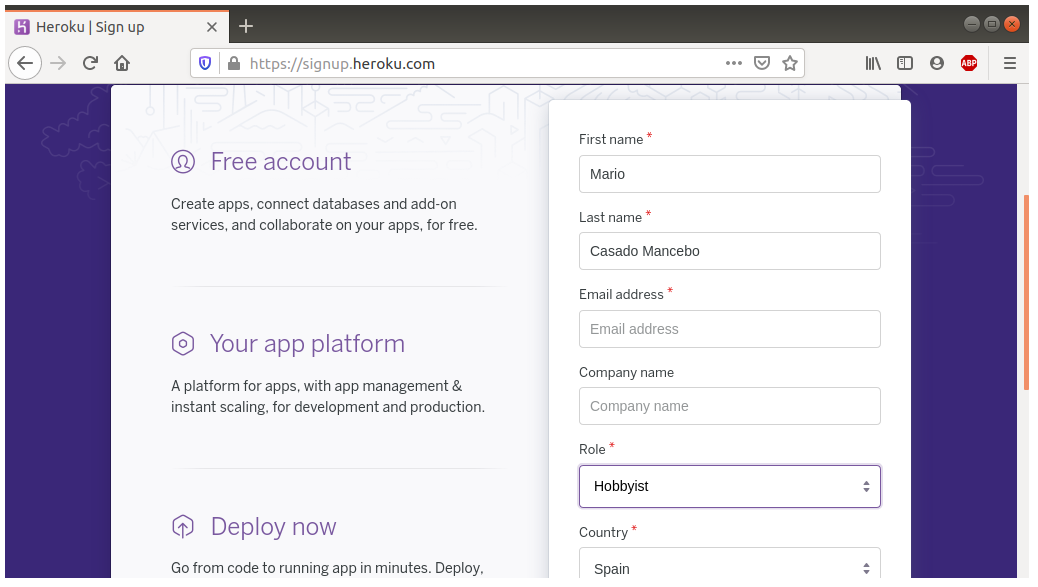

1.1 Create a Heroku account

First of all, we need to sign up on Heroku to be able to run web services on its servers. Go to signup.heroku.com and fill in the registration form. Once you receive the confirmation email, go to the login page and sign in to your dashboard.

1.2 Deploy a Doccano instance to Heroku

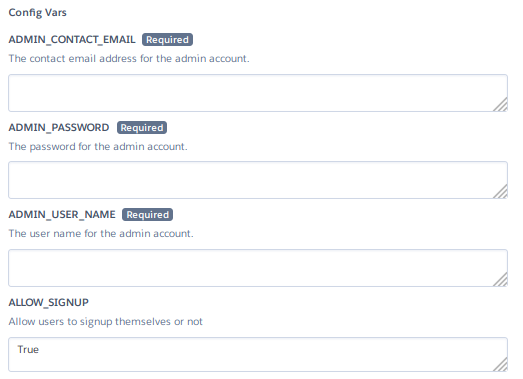

Without closing the dashboard, open Doccano's one-click Heroku deployment page in a new tab. You will see a configuration form for setting up Doccano.

It is recommended to leave the app name empty so that Heroku assigns a random one. As for the credentials, make sure not to use admin and password as the ADMIN_USER and ADMIN_PASSWORD values. If you plan to use Doccano on your own, set ALLOW_SIGNUP to False so that nobody else can sign up. Once the form is filled in, click Deploy.
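The advice above boils down to a few checks on the form values. The snippet below is a minimal sketch that flags weak settings before you click Deploy. The `check_deploy_settings` helper is hypothetical (it is not part of Heroku or Doccano); it only assumes the form fields are named ADMIN_USER, ADMIN_PASSWORD and ALLOW_SIGNUP, as described above.

```python
from typing import Dict, List

# Values this post advises against using for the admin credentials.
WEAK_VALUES = {"admin", "password"}


def check_deploy_settings(settings: Dict[str, str], private: bool = True) -> List[str]:
    """Hypothetical helper: return warnings for the Heroku form values."""
    warnings = []
    if settings.get("ADMIN_USER", "").lower() in WEAK_VALUES:
        warnings.append("ADMIN_USER uses a default value")
    if settings.get("ADMIN_PASSWORD", "").lower() in WEAK_VALUES:
        warnings.append("ADMIN_PASSWORD uses a default value")
    # A private instance should not let strangers create accounts.
    if private and settings.get("ALLOW_SIGNUP", "").lower() != "false":
        warnings.append("ALLOW_SIGNUP should be False for a private instance")
    return warnings


if __name__ == "__main__":
    form = {"ADMIN_USER": "admin", "ADMIN_PASSWORD": "s3cure-Pass!", "ALLOW_SIGNUP": "True"}
    for warning in check_deploy_settings(form):
        print(warning)
```

With the sample form, the script prints the warnings for ADMIN_USER and ALLOW_SIGNUP; an empty list means the settings follow the recommendations.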

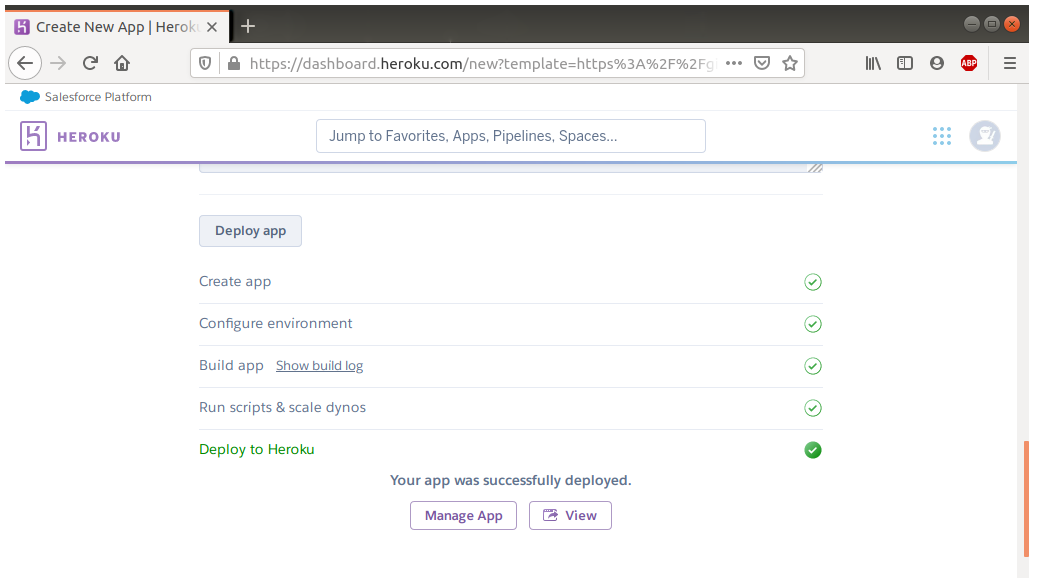

Allow a few minutes for the deployment to complete. Once it has finished, click Manage App to open the application dashboard, then click Open app to access your Doccano instance.

2 Doccano

2.1 Importing the corpus

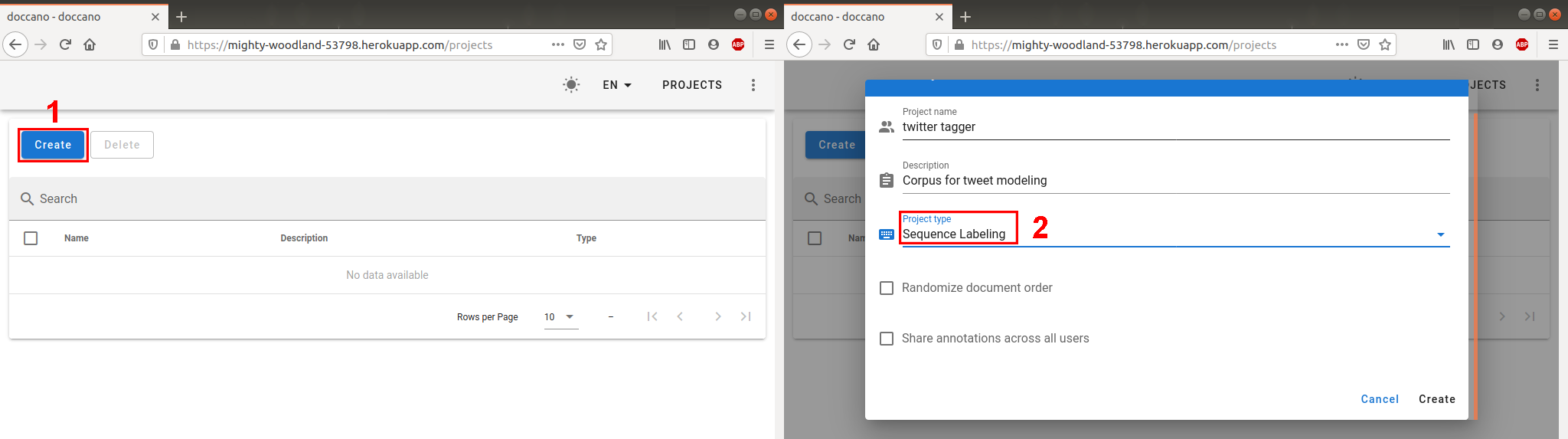

First, log in (there is a button at the top right corner) with the username and password you set in the Heroku configuration. Then create a new project by clicking Create (1) and filling in the form. Make sure to set the project type to Sequence labeling (2).

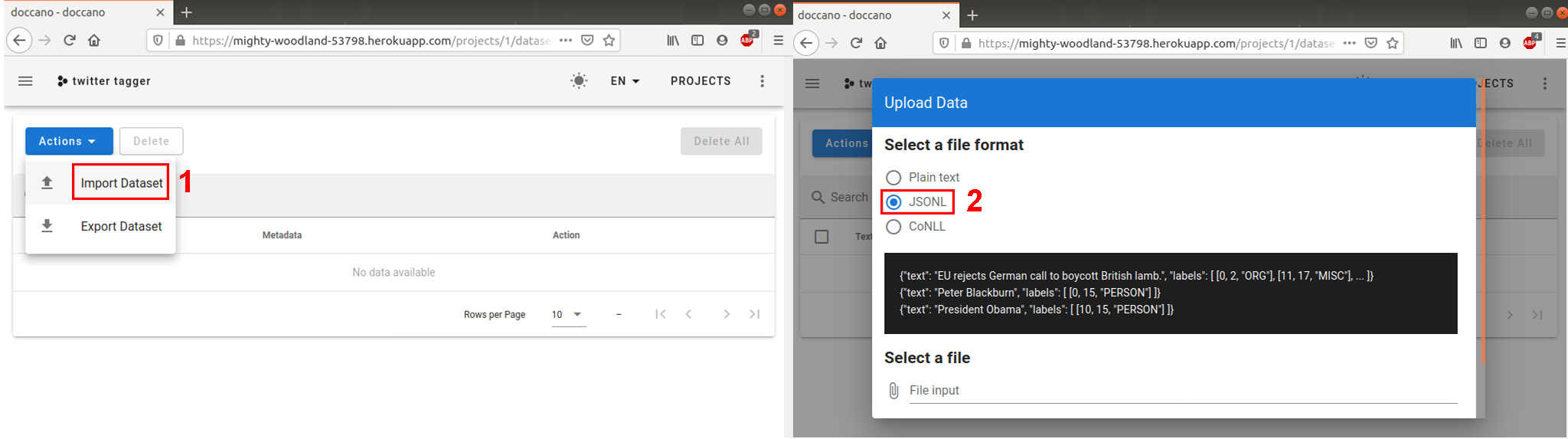

Next, you will be offered some quick tutorials on how to work with Doccano. For now, expand the menu by clicking the three lines at the top left corner, open the Dataset menu, expand the Actions button and select the Import Dataset option (1).

In the previous post of this series (Creating a corpus for Named Entity Recognition), we prepared a JSONL corpus, so we will select the JSONL option in the form (2) and attach the corpus file to the file input field. Once the import finishes, each entry appears as a row, ready to be annotated.
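Before uploading, it can be worth checking locally that every line of the file is a standalone JSON object with a non-empty `text` field, which, as far as we know, is the field Doccano reads the text from by default. The sketch below assumes entries shaped like `{"text": ..., "id": ..., "title": ...}`, where `id` and `title` are the metadata mentioned in section 2.3; those two key names are assumptions, so adapt them to your corpus. The `validate_jsonl` helper and the `sample_corpus.jsonl` file name are hypothetical.

```python
import json
from pathlib import Path
from typing import List


def validate_jsonl(path: Path) -> List[str]:
    """Return a list of problems found in a JSONL corpus (empty if none)."""
    problems = []
    with path.open(encoding="utf-8") as f:
        for number, line in enumerate(f, start=1):
            if not line.strip():
                continue  # blank lines are skipped, not reported
            try:
                entry = json.loads(line)
            except json.JSONDecodeError as err:
                problems.append(f"line {number}: invalid JSON ({err.msg})")
                continue
            if not isinstance(entry, dict):
                problems.append(f"line {number}: not a JSON object")
            elif not isinstance(entry.get("text"), str) or not entry["text"].strip():
                problems.append(f"line {number}: missing or empty 'text' field")
    return problems


if __name__ == "__main__":
    sample = Path("sample_corpus.jsonl")
    # Two made-up entries: the second one lacks its text.
    entries = [
        {"text": "Just finished watching Dark, what a show!", "id": 1, "title": "Dark"},
        {"id": 2, "title": "Dune"},
    ]
    lines = (json.dumps(e, ensure_ascii=False) for e in entries)
    sample.write_text("\n".join(lines) + "\n", encoding="utf-8")
    for problem in validate_jsonl(sample):
        print(problem)
```

Run against your real corpus file instead of the sample, an empty result means every line should parse.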

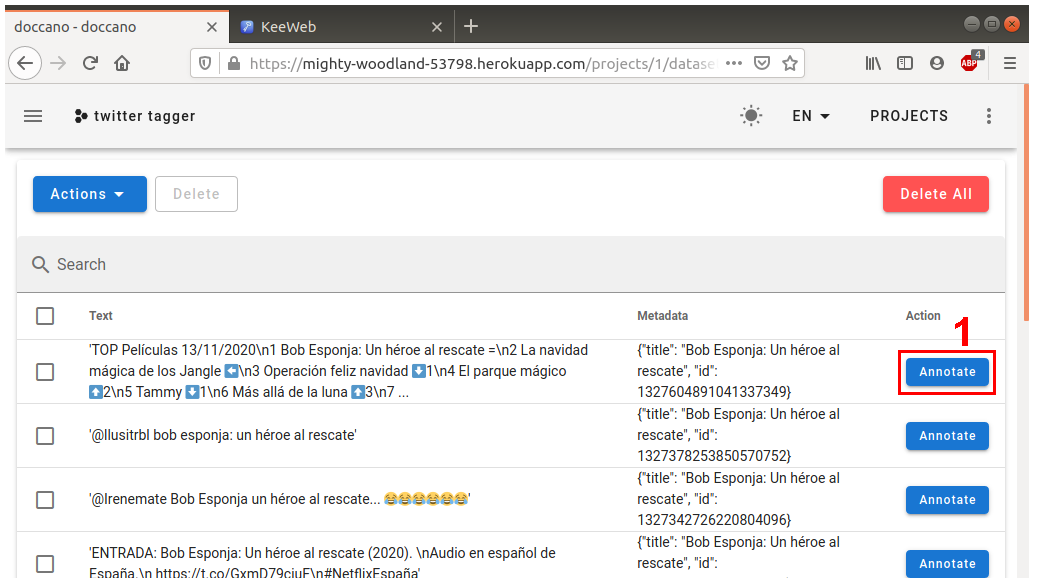

To start working, you can either click the Annotate button next to the first row (1) of the dashboard or open the left side menu and click Start Annotation.

2.2 Creating the tags

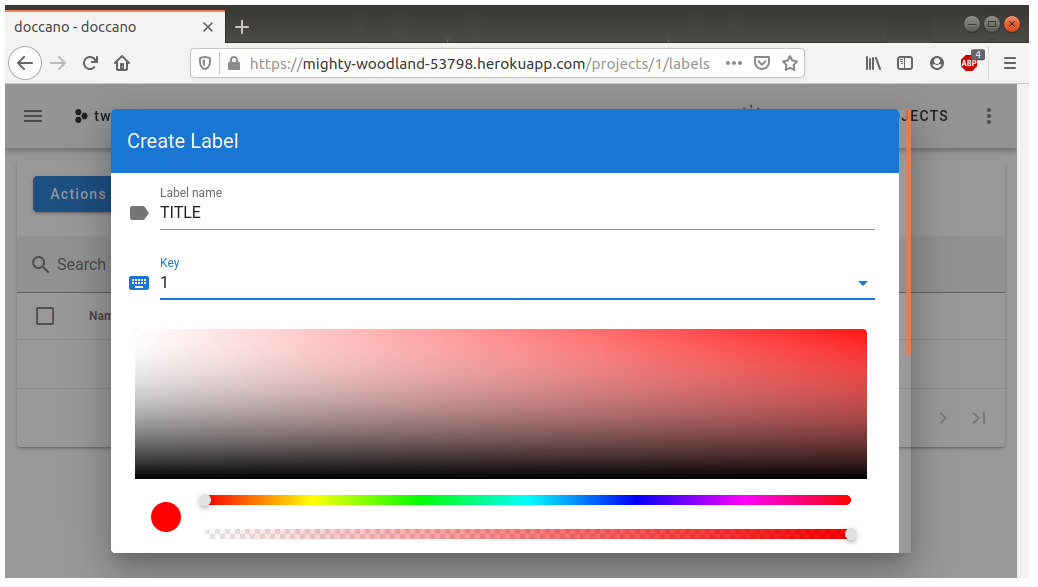

Before we can tag the tweets, we need to define the labels. Open the left side menu and go to the Labels page. Create a new label from the Actions button and name it Title. You can also choose a number as its keyboard shortcut and a color for the tag.

2.3 Annotating the corpus

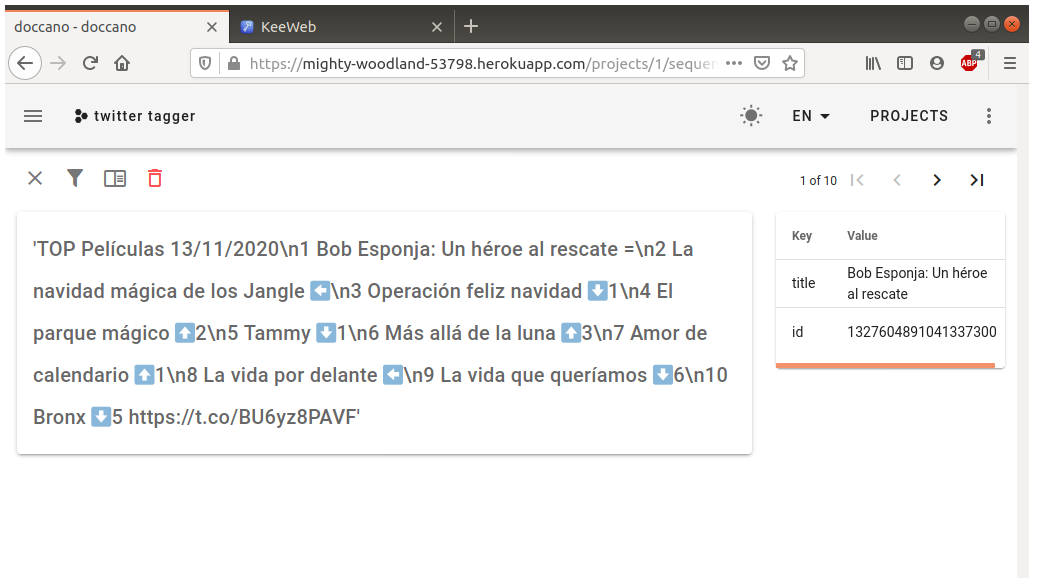

In the annotation interface, the text to be annotated appears next to the metadata we included in the corpus: an ID to keep track of each entry and the title that led to the tweet being selected.

To tag a title, highlight it in the text. A prompt will appear with the available labels (1). Click a label and the tag will show up on the highlighted text. Once every title in the current entry is tagged, click the X at the top left corner (2) to mark the tweet as done.
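Highlighting a title amounts to recording a span: the character offset where the entity starts, the offset where it ends, and its label. The plain-Python sketch below shows what a Title annotation corresponds to. The `find_title_spans` helper is hypothetical, and the `(start, end, label)` shape is chosen to mirror the span lists Doccano exports for sequence labeling projects; the exact export format may vary between Doccano versions.

```python
import re
from typing import List, Tuple

# (start offset, end offset, label); the end offset is exclusive.
Span = Tuple[int, int, str]


def find_title_spans(text: str, title: str, label: str = "Title") -> List[Span]:
    """Return a span for every case-insensitive occurrence of title in text."""
    pattern = re.compile(re.escape(title), re.IGNORECASE)
    return [(m.start(), m.end(), label) for m in pattern.finditer(text)]


if __name__ == "__main__":
    tweet = "Can't wait for the new season of The Crown! #TheCrown"
    for start, end, label in find_title_spans(tweet, "The Crown"):
        # Slicing the text with the offsets recovers the tagged title.
        print(start, end, label, repr(tweet[start:end]))
```

Plain substring matching like this is deliberately naive: it would also match "Dark" inside "darkness" and it misses hashtags such as #TheCrown. That is exactly why reviewing each tweet by hand in Doccano is worthwhile.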

When the whole corpus is tagged, go to the Dataset page and click Export Dataset in the Actions menu. You will get a JSONL file that we will use to train a NER model with spaCy.
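Before moving on, you may want to sanity-check the export, for example to find tweets you forgot to tag. The sketch below assumes each exported line is a JSON object with a `text` field and spans stored as `[start, end, label]` lists under a `label` key (or `labels`). That matches the format we expect from Doccano sequence labeling exports, but other versions may store entities differently, so inspect one line of your file first. The `tagged_titles` helper and the `exported.jsonl` file name are hypothetical.

```python
import json
from pathlib import Path
from typing import Iterator, List, Tuple


def tagged_titles(path: Path) -> Iterator[Tuple[str, List[str]]]:
    """Yield each exported text together with the substrings tagged in it."""
    with path.open(encoding="utf-8") as f:
        for line in f:
            if not line.strip():
                continue
            entry = json.loads(line)
            # Assumed span format: [[start, end, label], ...] under "label" or "labels".
            spans = entry.get("label", entry.get("labels", []))
            text = entry["text"]
            yield text, [text[start:end] for start, end, _label in spans]


if __name__ == "__main__":
    for text, titles in tagged_titles(Path("exported.jsonl")):
        if not titles:
            print("No titles tagged:", text)
```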

Conclusion

In this post, we have become familiar with two tools. Heroku is a cloud platform that makes it easy to deploy web applications for free. Doccano is an open-source application that runs as a web service and is useful for annotating corpora. We have combined them to set up a website for tagging our corpus of tweets containing TV show and movie titles. Finally, once the work was done, we exported the annotated corpus.

In the next post, we will learn how to use the tagged corpus to train a NER model with Python’s spaCy library.